Validating Self-Reported Health Insurance Coverage: Preliminary Results on CPS and ACS

Many federal, state and private surveys include questions that measure health insurance coverage. Each survey has different origins, constraints and methodologies and, as a consequence, the surveys produce different estimates of coverage. While several factors could contribute to the variation in the estimates, research points to subtle differences in the questionnaires as driving much of this variation.

Validation studies that evaluate survey responses for individuals whose coverage is known from insurance plan records are rare. In September 2014, researchers and sponsors from several agencies came together to launch the Comparing Health Insurance Measurement Error study. The goal of this study is to assess reporting accuracy in two major federal surveys, the Current Population Survey Annual Social and Economic Supplement (CPS ASEC) and the American Community Survey (ACS), by comparing survey reports of coverage to enrollment records from a private health plan. The sample consisted of individuals known to be enrolled in a range of coverage types, including employer-sponsored insurance, nongroup coverage, qualified health plans from the marketplace and public coverage.

Phone numbers associated with these individuals were then randomly assigned to one of two survey treatments, one using the CPS ASEC questions and one using the ACS questions, and a split-panel telephone survey was conducted in the spring of 2015. Survey responses were then matched to the enrollment records at the person level to establish the accuracy of reported point-in-time health insurance coverage. My presentation at the 2016 American Association for Public Opinion Research annual conference covers reporting accuracy for public and private coverage in both the CPS ASEC and the ACS; future research will explore the reporting accuracy of more detailed coverage types.

The line between public and private coverage is becoming increasingly blurry. For example, some states offer public programs that charge a premium, while other states offer marketplace coverage (which is considered private) that is completely subsidized. In addition, the “no wrong door” marketplace encourages individuals to explore different plans and to complete a single application that determines eligibility for a range of coverage types, from completely subsidized Medicaid to unsubsidized marketplace coverage. These blurry lines make it increasingly difficult to capture coverage type in surveys. Our research suggests that no single data point is sufficient for categorizing coverage type; rather, several data points are needed, including the general source of coverage (employer, government, direct purchase, etc.) and whether the coverage (1) was obtained through the marketplace, (2) has a premium and (3) has a subsidized premium.

Judgments must also be made about how to combine these data points to categorize coverage. For example, coverage reported as obtained through the government, on the marketplace, with a subsidized premium could be subsidized marketplace coverage (i.e., private), or it could be the Children’s Health Insurance Program (CHIP), Medicaid or another public program that requires enrollees to pay a premium. As a result, an algorithm is needed to convert these data points into a coverage type.
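To make the ambiguity concrete, here is a minimal rule-based sketch that combines the data points listed above into "public", "private" or "ambiguous". The field names, the function `classify` and every rule in it are hypothetical illustrations; they are not the study's V1 or V2 algorithm, whose rules are not spelled out here.

```python
from dataclasses import dataclass


@dataclass
class CoverageReport:
    """Hypothetical survey answers about one plan (field names are illustrative)."""
    source: str             # general source: "employer", "government", "direct purchase", ...
    via_marketplace: bool   # (1) reported as obtained through the marketplace
    has_premium: bool       # (2) reported as having a premium
    subsidized: bool        # (3) reported premium subsidy (False when there is no premium)


def classify(r: CoverageReport) -> str:
    """Combine several data points into "public", "private", or "ambiguous".

    These rules are an illustrative assumption, not the study's V1 or V2 algorithm.
    """
    if r.source == "employer":
        return "private"
    if r.via_marketplace and r.has_premium and not r.subsidized:
        # Paying an unsubsidized premium on the marketplace points to a private plan.
        return "private"
    if not r.via_marketplace:
        if r.source == "government":
            return "public"
        if r.source == "direct purchase":
            return "private"
    # Example: government + marketplace + subsidized premium could be a subsidized
    # marketplace plan (private) or CHIP/Medicaid with a premium (public).
    return "ambiguous"


print(classify(CoverageReport("government", True, True, True)))    # ambiguous
print(classify(CoverageReport("government", False, False, False)))  # public
```

Any real algorithm still has to decide what to do with the "ambiguous" cases, which is where a conceptual, eligibility-based approach and a data-driven approach can diverge.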

Beginning in 2014, the CPS ASEC was revised to add questions about the marketplace, premiums and subsidies. In the Comparing Health Insurance Measurement Error study, two algorithms were developed for categorizing CPS ASEC reports as public or private coverage. The first, V1, was strictly conceptual and based on program eligibility rules, while the second, V2, was data driven and used a machine learning approach. The ACS does not yet include these questions, so coverage type was categorized based on answers to a standard “laundry list” of coverage types (direct purchase, Medicaid, etc.), with the direct purchase category assumed to capture marketplace coverage.
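The ACS categorization can be sketched as a simple lookup from the laundry-list items a respondent marks. The item labels, the two groupings and the function name below are hypothetical simplifications (the full ACS list has more items); the point is only that no marketplace, premium or subsidy answers enter the coding.

```python
from typing import Set

# Hypothetical item labels for an ACS-style "laundry list" of coverage types.
# This simplified grouping is an illustrative assumption, not the study's coding.
PRIVATE_ITEMS = {"employer", "direct purchase"}  # direct purchase assumed to include marketplace plans
PUBLIC_ITEMS = {"medicare", "medicaid"}


def acs_coverage_types(marked: Set[str]) -> Set[str]:
    """Map the items a respondent marked 'yes' to 'private' and/or 'public'.

    No marketplace, premium, or subsidy answers are used, because the ACS
    items do not ask about them.
    """
    types = set()
    if marked & PRIVATE_ITEMS:
        types.add("private")
    if marked & PUBLIC_ITEMS:
        types.add("public")
    return types


# A marketplace enrollee who answers "direct purchase" is coded as private.
print(acs_coverage_types({"direct purchase"}))  # {'private'}
```

Under this kind of coding, a person enrolled in a premium-charging public program who answers "direct purchase" would be counted as private, which is one way misclassification can arise.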

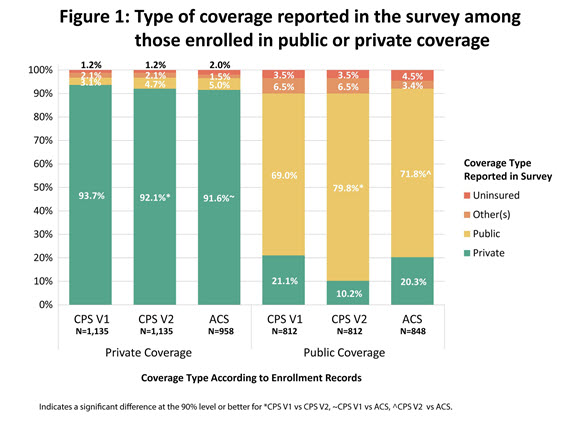

Two metrics are used to evaluate agreement between the coverage type estimated from the survey and the actual coverage type in the enrollment records. The first is underreporting (see Figure 1), measured as the percent of people known from the records to have a given coverage type who reported that type. Among those known to be enrolled in private coverage under the CPS ASEC/V1 treatment, 93.7 percent reported private coverage. This was slightly higher than both the CPS ASEC/V2 at 92.1 percent and the ACS at 91.6 percent. There was no significant difference between the CPS ASEC/V2 and the ACS estimates. Among those known to be enrolled in public coverage under the CPS ASEC/V2 treatment, 79.8 percent reported public coverage. This was significantly higher than both the ACS at 71.8 percent and the CPS ASEC/V1 at 68.96 percent. There was no significant difference between the CPS ASEC/V1 and the ACS.
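A minimal sketch of the first metric, assuming each matched person has one coverage type in the enrollment records and a set of reported types. The record layout, function name and toy data are hypothetical, and the sketch computes unweighted shares with no significance testing, so it does not reproduce the study's figures.

```python
from typing import List, Set, Tuple

# One matched person: (coverage type in enrollment records, set of types reported).
# This layout is a hypothetical simplification with one record type per person.
Record = Tuple[str, Set[str]]


def percent_enrolled_who_reported(records: List[Record], coverage: str) -> float:
    """Percent of people enrolled in `coverage` (per records) who reported it."""
    enrolled = [reported for true_type, reported in records if true_type == coverage]
    if not enrolled:
        raise ValueError(f"no enrollees with {coverage} coverage")
    hits = sum(1 for reported in enrolled if coverage in reported)
    return 100.0 * hits / len(enrolled)


# Toy data, not study data: 4 public enrollees, 2 of whom reported public coverage.
toy = [
    ("public", {"public"}),
    ("public", {"private"}),   # public enrollee who reported only private coverage
    ("public", {"public", "private"}),
    ("public", set()),         # reported no coverage
    ("private", {"private"}),
]
print(percent_enrolled_who_reported(toy, "public"))  # 50.0
```

The denominator is fixed by the records, so this measure captures how often true enrollees fail to report their coverage type.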

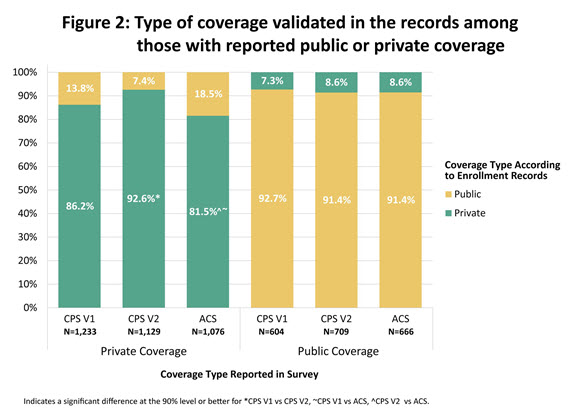

The second metric looks at agreement from the opposite direction (see Figure 2): among those who reported a given coverage type, the percent whose enrollment records confirm that type. Among those who reported private coverage, the percent validated as private in the enrollment records was highest in the CPS ASEC/V2 (92.6 percent), significantly higher than both the CPS ASEC/V1 at 86.2 percent and the ACS at 81.5 percent. The 4.7 percentage point difference between the CPS ASEC/V1 and the ACS was also significant. Among those who reported public coverage, the percent validated as public was 92.7 percent in the CPS ASEC/V1 and 91.4 percent in both the CPS ASEC/V2 and the ACS; none of the differences across surveys were significant. My presentation places these results in the context of past research and discusses their implications for questionnaire design.
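The second metric swaps the denominator: it starts from what people reported and checks it against the records. This sketch uses the same hypothetical record layout as a plain illustration, with toy data rather than study data, unweighted shares and no significance testing.

```python
from typing import List, Set, Tuple

Record = Tuple[str, Set[str]]  # (type in enrollment records, types reported); hypothetical layout


def percent_reports_validated(records: List[Record], coverage: str) -> float:
    """Percent of people who reported `coverage` whose records confirm that type."""
    reporters = [true_type for true_type, reported in records if coverage in reported]
    if not reporters:
        raise ValueError(f"nobody reported {coverage} coverage")
    confirmed = sum(1 for true_type in reporters if true_type == coverage)
    return 100.0 * confirmed / len(reporters)


# Toy data, not study data: 4 people reported private coverage, 3 are confirmed.
toy = [
    ("private", {"private"}),
    ("public", {"private"}),   # public enrollee who reported private coverage
    ("private", {"private"}),
    ("private", {"private", "public"}),
    ("public", {"public"}),
]
print(percent_reports_validated(toy, "private"))  # 75.0

# The percentage point gap quoted in the text, from the published shares:
print(round(86.2 - 81.5, 1))  # 4.7
```

A survey can score well on one metric and poorly on the other: reporting private coverage liberally raises the first metric for private coverage but lowers this one.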